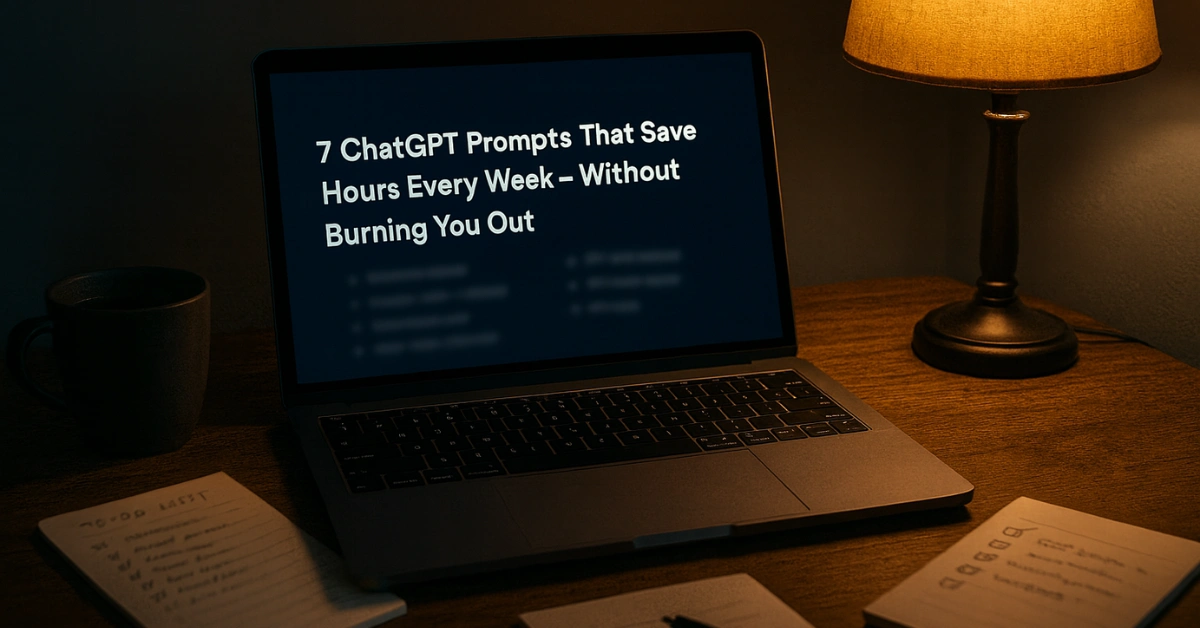

The Five-Drop Illusion

Google just dropped a set of numbers that sounds almost magical: the median Gemini text prompt uses 0.24 watt-hours of electricity, 0.03 grams of CO₂, and 0.26 milliliters of water, or about five drops. That’s smaller than charging your phone for a minute, smaller than the sweat you lose climbing one flight of stairs. In other words, a footprint so harmless you’d laugh it off.

But here’s the twist — those drops don’t vanish. Stack them across billions of prompts fired off daily and suddenly you’re not sipping water, you’re standing in a flood. It’s the perfect PR sleight of hand: shrink the story to one prompt so the numbers look microscopic, while the real damage builds quietly in the background.

The Magic Math Behind Google’s Tiny Number

Dig into the fine print and the math starts to wobble. Google’s calculation only covers text queries inside Gemini Apps. Not images. Not video. Not the monster costs of training a giant model. Just the featherweight stuff.

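Even the carbon side is polished for show. Google counts emissions using a “market-based” method, which credits the clean energy the company buys on paper against the electricity its servers actually draw. If it has bought enough renewable credits, the grid looks greener than it really is. A location-based count would instead use the real mix of the local grid powering the data center. The market-based view makes the math sparkle, but it hides the coal plants that may still be powering the very servers running your prompt. Tiny per prompt? Sure. Honest in reality? That’s another story.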
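To see how much the accounting method can matter, here is a minimal sketch that works backward from the two per-prompt figures quoted above. It treats the whole 0.03 g as electricity emissions, which is a simplification, and the three grid intensities are hypothetical round numbers chosen for illustration, not measurements of any real grid or of Google’s data centers.

```python
# Per-prompt figures quoted in the article (Google's reported values).
ENERGY_WH_PER_PROMPT = 0.24
CO2_G_PER_PROMPT = 0.03


def implied_intensity_g_per_kwh(co2_g: float, energy_wh: float) -> float:
    """Carbon intensity implied by a per-prompt CO2 and energy figure."""
    return co2_g / (energy_wh / 1000.0)


def emissions_g(energy_wh: float, intensity_g_per_kwh: float) -> float:
    """Emissions in grams for a given energy use at a given grid intensity."""
    return energy_wh / 1000.0 * intensity_g_per_kwh


# Intensity implied by the reported (market-based) numbers.
market_based = implied_intensity_g_per_kwh(CO2_G_PER_PROMPT, ENERGY_WH_PER_PROMPT)

# ASSUMPTION: illustrative location-based intensities in g CO2 per kWh.
HYPOTHETICAL_GRIDS = {
    "low_carbon_grid": 50.0,
    "mixed_grid": 400.0,
    "coal_heavy_grid": 800.0,
}

print(f"implied market-based intensity: {market_based:.0f} g/kWh")
for name, intensity in HYPOTHETICAL_GRIDS.items():
    grams = emissions_g(ENERGY_WH_PER_PROMPT, intensity)
    print(f"{name}: {grams:.3f} g CO2 per prompt")
```

Under these assumptions the reported figures imply roughly 125 g CO₂ per kWh, while the same 0.24 Wh on the hypothetical coal-heavy grid comes to about 0.19 g per prompt, around six times the headline number.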

The Critics Who Call Out the Green Gloss

Not everyone is clapping for Google’s five-drop miracle. Researchers like Shaolei Ren and Alex de Vries have already poked holes in the shine. Their main complaint? Google’s numbers look too good because they’re massaged with accounting tricks. Market-based carbon math makes emissions seem lower in places where Google buys renewable credits, even if the actual servers are still slurping power from coal-heavy grids.

Then there’s water. Google says a prompt drinks about five drops, or 0.26 mL, but critics argue the company isn’t counting the “hidden water” used upstream in energy generation. If your data center sits in a desert, those missing liters matter. Add in the fact that the report hasn’t gone through peer review, and what you’re left with feels less like a scientific truth and more like a marketing pitch dressed in decimals.

When Prompts Multiply Into Power Plants

One prompt might be tiny. A billion prompts a day? That’s a different beast. This is where the Jevons Paradox comes alive: the more efficiently we use a resource, the cheaper each use becomes, and the more of it we end up consuming overall. And AI prompts are free candy: addictive, endless, unstoppable.
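The jump from one prompt to a billion is plain multiplication, and a short sketch makes the units explicit. The per-prompt values are the ones Google reports for a text prompt; the billion-a-day volume is the hypothetical from the paragraph above, not a published usage figure, and the class and function names are made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptFootprint:
    energy_wh: float  # watt-hours per prompt
    co2_g: float      # grams of CO2 per prompt
    water_ml: float   # millilitres of water per prompt


# Google's reported per-prompt figures for a Gemini text prompt.
GEMINI_TEXT_PROMPT = PromptFootprint(energy_wh=0.24, co2_g=0.03, water_ml=0.26)


def daily_totals(fp: PromptFootprint, prompts_per_day: float) -> dict[str, float]:
    """Scale a per-prompt footprint to a daily total in larger units."""
    return {
        "energy_mwh": fp.energy_wh * prompts_per_day / 1e6,   # Wh -> MWh
        "co2_tonnes": fp.co2_g * prompts_per_day / 1e6,       # g -> tonnes
        "water_litres": fp.water_ml * prompts_per_day / 1e3,  # mL -> L
    }


# ASSUMPTION: one billion prompts per day, used only as an illustration.
totals = daily_totals(GEMINI_TEXT_PROMPT, 1e9)
for key, value in totals.items():
    print(f"{key}: {value:,.0f}")
```

At that assumed volume the totals come to about 240 MWh of electricity, 30 tonnes of CO₂, and 260,000 litres of water per day, before counting images, video, or training.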

The International Energy Agency is already warning that data center electricity demand could more than double by 2030, with AI as the main driver. In parts of the U.S., grid operators are scrambling so hard to keep up that some data centers are being forced to build their own gas-powered plants just to stay online. That’s the real math Google’s story doesn’t highlight: the leap from drops to floods, from harmless queries to power-hungry infrastructure that bends entire grids.

Google’s Big Picture Isn’t So Green

Here’s the contradiction Google can’t escape. While it celebrates efficiency gains per prompt, its total emissions have ballooned by more than 50% since 2019. That’s the company’s own admission. AI isn’t the only culprit, but it’s now one of the fastest-growing pieces of that puzzle.

Yes, Google has big promises. It talks about running entirely on clean energy, replenishing billions of gallons of water, and hitting bold 2030 climate goals. These efforts are real. But they’re being outpaced by demand. Think of it like bailing water from a leaking boat: you’re working hard, but the flood is rising faster than you can scoop it out.

The Real Cost of Your Prompts

So where does this leave you, the everyday AI user? Let’s put it in terms that make sense. Firing off 100 text prompts uses about 24 Wh, roughly as much energy as keeping a 10 W LED bulb on for two and a half hours. Pretty harmless, right?

But scale that to millions of people, doing the same thing every single day, and suddenly you’re looking at city-level electricity bills. Those “five harmless drops” turn into Olympic-sized swimming pools. That’s the tension Google’s narrative skips — the difference between your footprint and our footprint. One feels invisible, the other is impossible to ignore.
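The everyday comparisons can be checked with a few lines of arithmetic. This sketch converts a prompt count into LED-bulb hours and Olympic pools. The 10 W bulb matches the comparison used in the FAQ; the pool volume is an assumption (a nominal 50 m by 25 m by 2 m pool, or 2.5 million litres), and the yearly volume reuses the hypothetical billion prompts a day.

```python
ENERGY_WH_PER_PROMPT = 0.24  # Google's reported figure
WATER_ML_PER_PROMPT = 0.26   # Google's reported figure

LED_BULB_WATTS = 10.0
# ASSUMPTION: nominal Olympic pool of 50 m x 25 m x 2 m = 2,500,000 litres.
OLYMPIC_POOL_LITRES = 2_500_000.0


def led_bulb_hours(prompts: float) -> float:
    """Hours a 10 W LED bulb could run on the energy of `prompts` prompts."""
    return prompts * ENERGY_WH_PER_PROMPT / LED_BULB_WATTS


def olympic_pools(prompts: float) -> float:
    """Number of nominal Olympic pools filled by the water of `prompts` prompts."""
    litres = prompts * WATER_ML_PER_PROMPT / 1000.0
    return litres / OLYMPIC_POOL_LITRES


print(f"100 prompts: {led_bulb_hours(100):.1f} bulb-hours")
print(f"1 prompt: {led_bulb_hours(1) * 60:.2f} bulb-minutes")

# ASSUMPTION: one billion prompts a day for a full year.
prompts_per_year = 1e9 * 365
print(f"one year at 1e9/day: {olympic_pools(prompts_per_year):.1f} pools")
```

With these inputs, 100 prompts work out to about 2.4 bulb-hours, and a year of a billion prompts a day fills roughly 38 nominal pools, or about one pool every ten days.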

How to Use AI Without Wasting the Planet

You can’t single-handedly fix Google’s grid problem, but you’re not powerless either. The first step is awareness: don’t treat prompts like disposable tissue. Think before you type, batch your questions, and avoid endless retries just because it feels free. Every extra spin of the server eats power somewhere.

On the industry side, companies are starting to shift workloads to the data centers and times of day where renewable energy is most plentiful. Users don’t always see that, but you can support tools and services that prioritize efficiency. And if you’re a heavy user, experiment with lighter models for everyday tasks. Cleaner AI isn’t just about corporate pledges; it’s also about smarter habits on our screens.
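As a rough picture of what that kind of routing means, the sketch below picks the region with the lowest current grid carbon intensity among those with spare capacity. It is not any company’s actual scheduler: the `Region` type, the region names, and the intensity values are all invented for illustration, and a real system would also weigh latency, cost, and data-residency rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Region:
    name: str
    carbon_intensity_g_per_kwh: float  # current grid intensity (hypothetical feed)
    has_capacity: bool                 # whether the region can take more work


def pick_cleanest_region(regions: list[Region]) -> Region:
    """Return the available region with the lowest carbon intensity."""
    available = [r for r in regions if r.has_capacity]
    if not available:
        raise ValueError("no region has spare capacity")
    return min(available, key=lambda r: r.carbon_intensity_g_per_kwh)


# ASSUMPTION: a made-up snapshot of three regions at one moment in time.
SNAPSHOT = [
    Region("region-a", 420.0, True),
    Region("region-b", 90.0, False),  # cleanest, but full
    Region("region-c", 150.0, True),
]

print(pick_cleanest_region(SNAPSHOT).name)
```

In this snapshot the cleanest region is full, so the work goes to the next-cleanest one, which is the kind of trade-off such routing has to make.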

The Flood Ahead

Google’s “five drops” story is a neat headline, but it misses the bigger picture. AI doesn’t live in single prompts; it lives in billions. And those billions are already rewriting energy forecasts, straining power grids, and testing the planet’s limits.

The irony is sharp. Efficiency makes each prompt look tiny, but efficiency also fuels demand. The more harmless AI feels, the more we’ll use it, and the more invisible damage stacks up. That’s the paradox Google didn’t put in its press release. The future of AI won’t be measured in drops. It will be measured in floods.

FAQs:

How much energy does one AI prompt use?

According to Google, a Gemini text prompt uses about 0.24 Wh of energy, equal to running a 10 W LED bulb for about a minute and a half.

What is the carbon footprint of an AI prompt?

Google reports 0.03 g CO₂ per Gemini text prompt, though experts argue local grid emissions may be higher.

Does AI use water?

Yes. Google says one text prompt consumes 0.26 mL of water, but indirect water from electricity generation is not included.

Why are Google’s AI sustainability numbers controversial?

Because they use market-based accounting and omit hidden water and training costs, making the footprint look smaller than reality.

Will AI increase global electricity demand?

Yes. The IEA projects that data center electricity demand could more than double by 2030, with AI a major driver of growth.